Matrices

Definition

A matrix is a rectangular array of numbers, symbols, or expressions arranged in rows and columns. Formally, an $m \times n$ matrix has $m$ rows and $n$ columns:

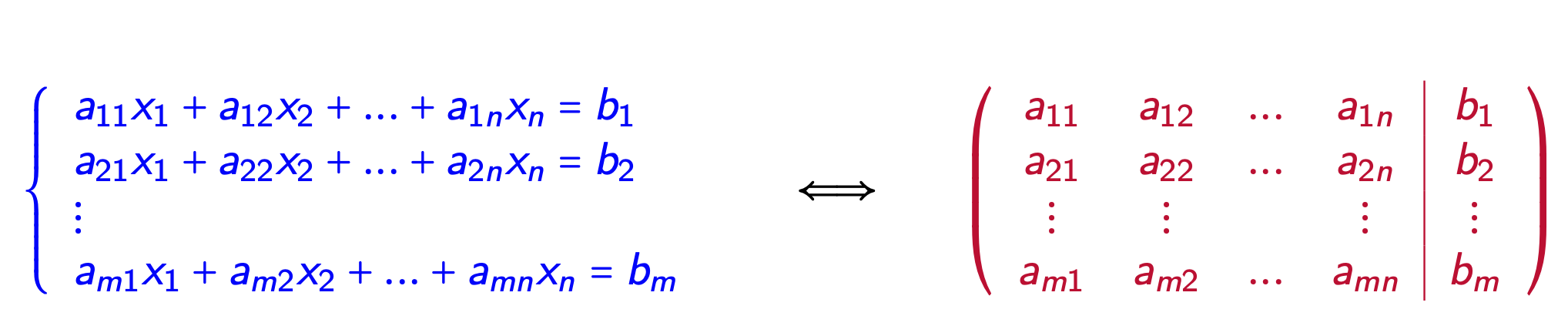

Augmented Matrices as Systems of Linear Equations

Types of Matrices

- Square Matrix: A matrix with the same number of rows and columns ($m = n$)

- Identity Matrix: A square matrix with 1's on the main diagonal and 0's elsewhere

- Augmented Matrix: A matrix used to represent a system of linear equations

- Zero Matrix: A matrix with all elements equal to zero

- Diagonal Matrix: A square matrix with non-zero elements only on the main diagonal

- Triangular Matrix: A square matrix whose non-zero entries are confined to one side of the main diagonal:

  - Upper triangular: all entries below the main diagonal are zero

  - Lower triangular: all entries above the main diagonal are zero

- Symmetric Matrix: A square matrix equal to its transpose ($A = A^T$)
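Several of the types listed above can be checked mechanically. The following is a minimal sketch, assuming a matrix is stored as a list of rows; the helper names (`is_square`, `is_symmetric`, `is_upper_triangular`, `is_diagonal`) are illustrative and not taken from any library.

```python
def is_square(m):
    """True when every row has as many entries as there are rows."""
    return all(len(row) == len(m) for row in m)


def is_symmetric(m):
    """True when m is square and m[i][j] == m[j][i] for all i, j."""
    n = len(m)
    return is_square(m) and all(
        m[i][j] == m[j][i] for i in range(n) for j in range(n)
    )


def is_upper_triangular(m):
    """True when m is square and every entry below the main diagonal is zero."""
    n = len(m)
    return is_square(m) and all(
        m[i][j] == 0 for i in range(n) for j in range(i)
    )


def is_diagonal(m):
    """True when m is square and every off-diagonal entry is zero."""
    n = len(m)
    return is_square(m) and all(
        m[i][j] == 0 for i in range(n) for j in range(n) if i != j
    )


identity = [[1, 0], [0, 1]]
print(is_diagonal(identity))                    # True
print(is_symmetric([[1, 2], [2, 3]]))           # True
print(is_upper_triangular([[1, 2], [0, 3]]))    # True
```

Note that these checks allow zeros on the diagonal, so the zero matrix counts as diagonal, triangular, and symmetric.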

Matrix Operations

Addition and Subtraction

For matrices $A$ and $B$ of the same dimensions, the sum and difference are computed entry by entry:

$$(A \pm B)_{ij} = a_{ij} \pm b_{ij}$$
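Addition and subtraction combine corresponding entries, so both matrices must have the same shape. A minimal sketch, assuming list-of-rows storage; `add` and `subtract` are hypothetical helper names.

```python
def _check_same_shape(a, b):
    """Raise ValueError unless a and b have identical dimensions."""
    if len(a) != len(b) or any(len(ra) != len(rb) for ra, rb in zip(a, b)):
        raise ValueError("matrices must have the same dimensions")


def add(a, b):
    """Entrywise sum of two matrices of the same shape."""
    _check_same_shape(a, b)
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def subtract(a, b):
    """Entrywise difference of two matrices of the same shape."""
    _check_same_shape(a, b)
    return [[x - y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]
print(add(A, B))       # [[6, 8], [10, 12]]
print(subtract(A, B))  # [[-4, -4], [-4, -4]]
```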

Scalar Multiplication

For a scalar $c$ and matrix $A$, every entry is multiplied by $c$:

$$(cA)_{ij} = c \, a_{ij}$$
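Scalar multiplication multiplies every entry by the same number. A short sketch under the same list-of-rows assumption; `scale` is an illustrative name.

```python
def scale(c, a):
    """Multiply every entry of matrix a by the scalar c."""
    return [[c * x for x in row] for row in a]


print(scale(3, [[1, -2], [0, 4]]))  # [[3, -6], [0, 12]]
```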

Matrix Multiplication

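For an $m \times n$ matrix $A$ and an $n \times p$ matrix $B$, the product $AB$ is the $m \times p$ matrix with entries:

$$(AB)_{ij} = \sum_{k=1}^{n} a_{ik} b_{kj}$$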
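The product is defined only when the number of columns of the first matrix equals the number of rows of the second; each entry of the result is the dot product of a row of the first with a column of the second. A minimal sketch (the name `matmul` is illustrative, and the straightforward triple loop is chosen for clarity rather than speed):

```python
def matmul(a, b):
    """Product of an m x n matrix a and an n x p matrix b, as an m x p matrix."""
    n = len(b)
    if any(len(row) != n for row in a):
        raise ValueError("columns of a must match rows of b")
    p = len(b[0])
    return [
        [sum(a[i][k] * b[k][j] for k in range(n)) for j in range(p)]
        for i in range(len(a))
    ]


A = [[1, 2, 3], [4, 5, 6]]        # 2 x 3
B = [[7, 8], [9, 10], [11, 12]]   # 3 x 2
print(matmul(A, B))  # [[58, 64], [139, 154]]
```

Swapping the operands gives a 3 x 3 result instead, which illustrates that matrix multiplication is not commutative in general.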

Transpose

The transpose of an $m \times n$ matrix $A$ is the $n \times m$ matrix $A^T$ where:

$$(A^T)_{ij} = a_{ji}$$
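Transposing swaps rows and columns. With list-of-rows storage this can be sketched in one line using the built-in `zip`; `transpose` is an illustrative name.

```python
def transpose(a):
    """Return the transpose: row i of the result is column i of a."""
    return [list(col) for col in zip(*a)]


print(transpose([[1, 2, 3], [4, 5, 6]]))  # [[1, 4], [2, 5], [3, 6]]
```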

Properties and Applications

Determinant

For a square matrix, the determinant is a scalar that is non-zero exactly when the matrix is invertible; its absolute value is the factor by which the linear transformation represented by the matrix scales volume.
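One way to compute a determinant for small matrices is cofactor (Laplace) expansion along the first row. The sketch below assumes list-of-rows storage and a non-empty square matrix; `det` is an illustrative name, and the recursion takes factorial time, so it is not how larger determinants are computed in practice.

```python
def det(m):
    """Determinant of a non-empty square matrix by cofactor expansion along row 0."""
    n = len(m)
    if n == 0 or any(len(row) != n for row in m):
        raise ValueError("matrix must be square and non-empty")
    if n == 1:
        return m[0][0]
    total = 0
    for j in range(n):
        # Minor: delete row 0 and column j.
        minor = [row[:j] + row[j + 1:] for row in m[1:]]
        total += (-1) ** j * m[0][j] * det(minor)
    return total


print(det([[2, 0], [0, 3]]))  # 6: areas are scaled by a factor of 6
print(det([[1, 2], [2, 4]]))  # 0: the rows are proportional, so no inverse exists
```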

Inverse

A square matrix $A$ has an inverse $A^{-1}$ if $AA^{-1} = A^{-1}A = I$, where $I$ is the identity matrix.
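For the 2 x 2 case the inverse has a closed form: swap the diagonal entries, negate the off-diagonal entries, and divide by the determinant. A minimal sketch, assuming integer or rational entries so that `fractions.Fraction` keeps the arithmetic exact; `inverse_2x2` is an illustrative name.

```python
from fractions import Fraction


def inverse_2x2(m):
    """Inverse of a 2 x 2 matrix [[a, b], [c, d]] via the adjugate formula."""
    (a, b), (c, d) = m
    determinant = Fraction(a * d - b * c)
    if determinant == 0:
        raise ValueError("matrix is singular and has no inverse")
    return [
        [d / determinant, -b / determinant],
        [-c / determinant, a / determinant],
    ]


A = [[4, 7], [2, 6]]
A_inv = inverse_2x2(A)
print(A_inv)  # entries 3/5, -7/10, -1/5, 2/5 as Fraction objects
```

Multiplying `A` by `A_inv` in either order gives the identity matrix, which is the defining property of the inverse.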

Rank

The rank of a matrix is the dimension of the vector space generated by its columns (or rows).
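Rank can be found by row-reducing the matrix and counting the pivots. The following is a sketch using forward elimination with exact `Fraction` arithmetic (an assumption that sidesteps floating-point tolerance questions); `rank` is an illustrative name.

```python
from fractions import Fraction


def rank(m):
    """Number of pivots found by forward elimination on a copy of m."""
    rows = [[Fraction(x) for x in row] for row in m]
    n_rows = len(rows)
    n_cols = len(rows[0]) if rows else 0
    pivots = 0  # also the index of the next row to receive a pivot
    for col in range(n_cols):
        if pivots == n_rows:
            break
        # Find a row at or below the current pivot row with a non-zero entry.
        pivot = next((i for i in range(pivots, n_rows) if rows[i][col] != 0), None)
        if pivot is None:
            continue
        rows[pivots], rows[pivot] = rows[pivot], rows[pivots]
        # Eliminate the entries below the pivot.
        for i in range(pivots + 1, n_rows):
            factor = rows[i][col] / rows[pivots][col]
            rows[i] = [x - factor * y for x, y in zip(rows[i], rows[pivots])]
        pivots += 1
    return pivots


print(rank([[1, 2], [2, 4]]))                   # 1
print(rank([[1, 2, 3], [4, 5, 6], [7, 8, 9]]))  # 2
```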

Eigenvalues and Eigenvectors

For a square matrix $A$, a non-zero vector $v$ is an eigenvector with eigenvalue $\lambda$ if $Av = \lambda v$.
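For a 2 x 2 matrix the eigenvalues are the roots of the characteristic polynomial, which depends only on the trace and the determinant. The sketch below assumes real eigenvalues and raises an error otherwise; `eigenvalues_2x2` and `apply` are illustrative names.

```python
import math


def eigenvalues_2x2(m):
    """Real eigenvalues of [[a, b], [c, d]] from x^2 - trace*x + det = 0."""
    (a, b), (c, d) = m
    trace = a + d
    determinant = a * d - b * c
    discriminant = trace * trace - 4 * determinant
    if discriminant < 0:
        raise ValueError("eigenvalues are complex")
    root = math.sqrt(discriminant)
    return (trace + root) / 2, (trace - root) / 2


def apply(m, v):
    """Matrix-vector product m v."""
    return [sum(x * y for x, y in zip(row, v)) for row in m]


A = [[2, 1], [1, 2]]
print(eigenvalues_2x2(A))  # (3.0, 1.0)

v = [1, 1]
print(apply(A, v))         # [3, 3], which is 3 times v
```

Here `[1, 1]` is an eigenvector for the eigenvalue 3, since multiplying by the matrix only rescales it.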

Applications

Systems of Linear Equations: Matrices provide a compact way to represent and solve systems using methods such as Gaussian elimination and reduction to reduced row echelon form (RREF).
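As an illustration, a square system with a unique solution can be solved by building the augmented matrix and reducing it until the coefficient part becomes the identity (Gauss-Jordan elimination). This is a sketch under stated assumptions: a square coefficient matrix, exact `Fraction` arithmetic, and a hypothetical function name `solve`.

```python
from fractions import Fraction


def solve(a, b):
    """Solve a x = b for square a by reducing the augmented matrix [a | b]."""
    n = len(a)
    aug = [[Fraction(x) for x in row] + [Fraction(rhs)] for row, rhs in zip(a, b)]
    for col in range(n):
        # Pick a row with a non-zero entry in this column and move it up.
        pivot = next((r for r in range(col, n) if aug[r][col] != 0), None)
        if pivot is None:
            raise ValueError("system has no unique solution")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        # Scale the pivot row so the pivot entry becomes 1.
        pivot_value = aug[col][col]
        aug[col] = [x / pivot_value for x in aug[col]]
        # Clear the column in every other row.
        for r in range(n):
            if r != col and aug[r][col] != 0:
                factor = aug[r][col]
                aug[r] = [x - factor * y for x, y in zip(aug[r], aug[col])]
    return [row[-1] for row in aug]


# x + y = 3 and 2x - y = 0 have the solution x = 1, y = 2.
solution = solve([[1, 1], [2, -1]], [3, 0])
print(solution == [1, 2])  # True
```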

Linear Transformations: Matrices represent linear transformations between vector spaces.

Computer Graphics: Transformation matrices are used for rotation, scaling, and translation in 2D and 3D graphics.
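A small sketch of a 2D rotation: the standard rotation matrix for an angle theta (counterclockwise, in radians) applied to a point. The names `rotation` and `apply` are illustrative. Translation is not covered here because it is not a linear map of the plane; graphics code usually handles it with homogeneous coordinates.

```python
import math


def rotation(theta):
    """2 x 2 matrix rotating the plane counterclockwise by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s], [s, c]]


def apply(m, v):
    """Matrix-vector product m v."""
    return [sum(x * y for x, y in zip(row, v)) for row in m]


# Rotating the point (1, 0) by 90 degrees gives approximately (0, 1),
# up to floating-point rounding.
point = apply(rotation(math.pi / 2), [1.0, 0.0])
print(point)
```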

Data Science: Matrices are fundamental in techniques like Principal Component Analysis (PCA) and Linear Regression.

Quantum Mechanics: Matrices represent observables and transformations in quantum systems.

Computational Methods

Many numerical methods work by factoring a matrix into simpler pieces. Common factorizations include:

- LU Decomposition

- QR Factorization

- Singular Value Decomposition (SVD)

- Eigenvalue Decomposition
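To give a flavor of the first item, the following sketches LU decomposition in its simplest (Doolittle) form: a square matrix is factored into a unit lower triangular matrix L and an upper triangular matrix U. The sketch assumes no row exchanges are needed and raises an error on a zero pivot; practical implementations add pivoting. The name `lu` is illustrative.

```python
from fractions import Fraction


def lu(a):
    """Factor square matrix a as L U without pivoting (Doolittle form)."""
    n = len(a)
    lower = [[Fraction(int(i == j)) for j in range(n)] for i in range(n)]
    upper = [[Fraction(x) for x in row] for row in a]
    for k in range(n):
        if upper[k][k] == 0:
            raise ValueError("zero pivot: row exchanges would be needed")
        for i in range(k + 1, n):
            # The multiplier used to eliminate entry (i, k) is stored in L.
            lower[i][k] = upper[i][k] / upper[k][k]
            upper[i] = [x - lower[i][k] * y for x, y in zip(upper[i], upper[k])]
    return lower, upper


A = [[4, 3], [6, 3]]
L, U = lu(A)
print(L)  # rows [1, 0] and [3/2, 1] as Fraction objects
print(U)  # rows [4, 3] and [0, -3/2] as Fraction objects
```

Multiplying `L` by `U` reproduces `A`, and the two triangular factors can then be used to solve linear systems by forward and back substitution.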